In the Age of AI, a Giant Glowing Poster Speaks Louder Than a Neural Net

The artificial intelligence boom has delivered many things: soaring valuations, lavish conferences, and a collective hallucination that reality only counts when mediated through a screen. But as thousands of executives flocked to yet another financial services convention this year—badged, badged again, and Bluetooth-tracked—we made a different kind of bet.

While everyone else chased attention inside the browser, we took a more literal route: the wall.

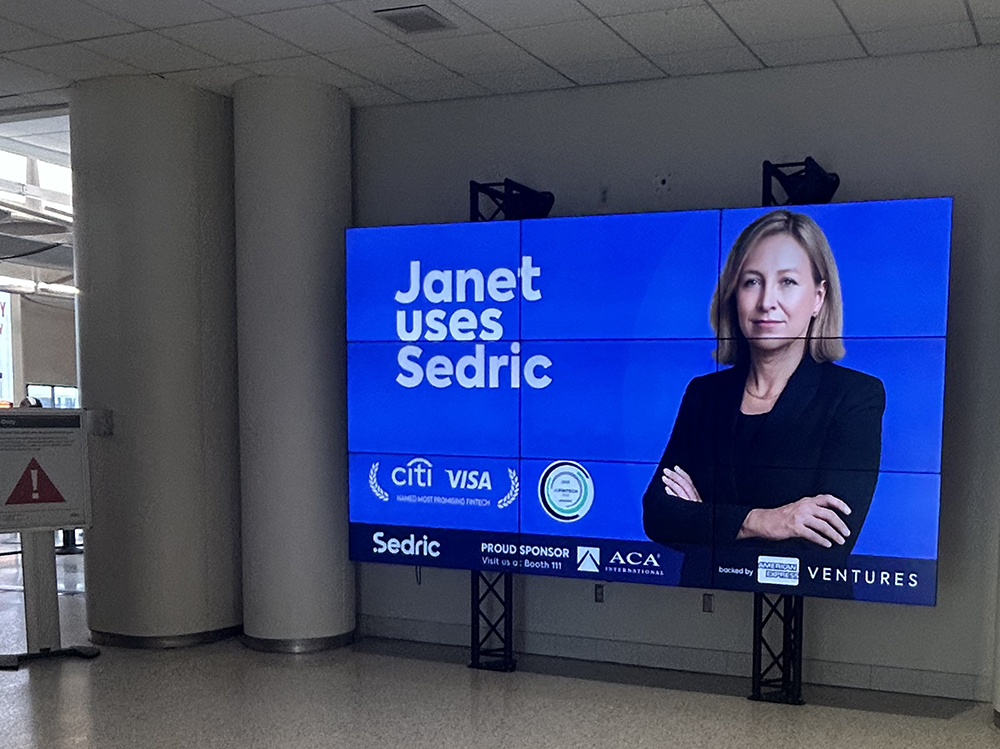

We were advertising Sedric (where I serve as VP Marketing), a company that builds AI-powered compliance platforms, the old-fashioned way. Not through high-cost, low-ROI LinkedIn banners or other digital noise, but through sheer physical presence. I bought media at the airport. I staked a massive glowing digital banner on the convention center, directly in the path of oncoming foot traffic from the sponsor hotel. And at the heart of the event, we planted a floor-to-ceiling digital monolith at the main entrance of the exhibit hall, a display so large it made the official conference signage look like directional Post-it notes.

And it worked.

Against the Grain

Conventional wisdom in the AI space says the smartest way to market is through smarter algorithms. Target. Retarget. Personalize. Automate. You’re not marketing unless your funnel has a funnel.

But conventional wisdom is often wrong. When they zig, you gotta zag!

What digital advertising gains in precision, it loses in memory. By commonly cited estimates, the average person sees between 4,000 and 10,000 ads per day, most of them algorithmically served and instantly forgotten. What people don’t forget is the thing they had to crane their necks to see.

That’s exactly what happened with Sedric’s presence at the event.

Scene One: Baggage Claim Bravado

Our campaign began long before the attendees reached the venue. As soon as conference attendees arrived en masse and exited the airport’s secure area, they were greeted by a massive sign: “Janet Uses Sedric.” They saw it again on a few standing signs, and again at baggage claim.

No jargon. No generative promises. Just a short, memorable statement. More than one person told us later that this was their first encounter with the brand, before they opened the conference app and before they walked into the hotel. The message registered, not because it was targeted, but because it was unavoidable. More than a dozen people later asked us who “Janet” is and what Sedric is all about.

Scene Two: The Pavement Plays Its Part

Outside the convention center, most brands were busy buying pixels. We bought concrete. A series of Sedric-branded installations lined the walkways—bold, self-assured, almost austere in their restraint. They didn’t shout. They stood. And in doing so, they created a physical rhythm. A drumbeat of visual authority that led attendees from curb to check-in desk.

Scene Three: The Tower

Then came the showstopper: a floor-to-ceiling digital ad at the entrance to the exhibition hall. Twenty feet tall, placed dead center, with a clear and deliberate message.

While most companies splintered their branding across 10-by-10 booths and awkward giveaways, we went vertical. Our display loomed over the exhibit floor, casting a long shadow—literally and metaphorically—over lesser signage. This wasn’t marketing. It was architectural intent.

People took photos in front of it. They posted about it. And they remembered it.

Results You Can’t Scroll Past

The payoff came quickly and tangibly. Even before the conference began, attendees were snapping pictures of our airport banners and sending them, unsolicited, to our CEO with messages of praise. Full disclosure: it was a great feeling for me to kick off the conference with such an enthusiastic, validating response from our CEO! Booth visitors arrived saying, “I saw your billboard and had to ask what Sedric is.” My presentation on the Innovation Stage drew a full house, with dozens more standing at the back and along the sides, not because they knew us from LinkedIn, but because they saw us from the sidewalk. Nearly a hundred conversations were influenced by the media blitz. And a thousand conference attendees (and envious competitors) saw the Sedric brand loud and clear. We did nearly 50 demos over a day and a half. Not bad for a startup.

After the event, existing customers reached out to our customer success team, unprompted, to share photos and praise and say they’d seen our massive messages displayed at the venue. One billboard. Hundreds of conversations. Thousands of impressions. The campaign generated more meaningful dialogue than months of sponsored content could have hoped to. And not a single chatbot was required.

The Irony of the Moment

In a year dominated by talk of large language models and autonomous agents, it was oddly satisfying to hear constant chatter about our brand, not because a new agentic AI prompted it, but because a massive screen did.

In marketing, there’s a term called “category capture”—the moment when a brand becomes synonymous with a problem space. We didn’t need AI to generate that moment. We needed space, scale, and the nerve to defy the trend.

Lessons from the Analog Edge

The AI industry talks incessantly about disrupting the old playbook. But sometimes, the oldest plays still work—especially when no one else is running them.

Physical presence is the most underutilized lever in tech marketing today. In a landscape obsessed with “reach,” we chose resonance. In a digital arms race, we brought a billboard.

And as it turns out, when everyone zigs toward hyper-targeted AI ads, the most strategic move might just be a really, really big zag.